Practice Free AZ-305 Exam Online Questions

You plan to migrate data to Azure.

The IT department at your company identifies the following requirements:

✑ The storage must support 1 PB of data.

✑ The data must be stored in blob storage.

✑ The storage must support three levels of subfolders.

✑ The storage must support access control lists (ACLs).

You need to meet the requirements.

What should you use?

- A . a premium storage account that is configured for block blobs

- B . a general purpose v2 storage account that has hierarchical namespace enabled

- C . a premium storage account that is configured for page blobs

- D . a premium storage account that is configured for file shares and supports large file shares

B

Explanation:

Microsoft recommends that you use a GPv2 storage account for most scenarios. It supports up to 5 PB of capacity and provides blob storage, including Data Lake Storage.

Note: A key mechanism that allows Azure Data Lake Storage Gen2 to provide file system performance at object storage scale and prices is the addition of a hierarchical namespace. This allows the collection of objects/files within an account to be organized into a hierarchy of directories and nested subdirectories in the same way that the file system on your computer is organized. With a hierarchical namespace enabled, a storage account becomes capable of providing the scalability and cost-effectiveness of object storage, with file system semantics that are familiar to analytics engines and frameworks.
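To make the difference from a flat blob namespace concrete, here is a minimal sketch in plain Python. It is a toy model, not the Azure SDK: the `Directory` class, the `mkdirs` and `rename_child` helpers, and the folder and principal names are all hypothetical. It only illustrates that directories are real nodes that can carry their own ACL and be renamed in one operation.

```python
from dataclasses import dataclass, field


@dataclass
class Directory:
    """One node in a toy hierarchical namespace (illustrative, not the Azure SDK)."""
    name: str
    acl: dict = field(default_factory=dict)       # principal -> permission string
    children: dict = field(default_factory=dict)  # child name -> Directory

    def mkdirs(self, path: str) -> "Directory":
        """Create every missing directory along path and return the last one."""
        node = self
        for part in path.strip("/").split("/"):
            node = node.children.setdefault(part, Directory(part))
        return node

    def rename_child(self, old: str, new: str) -> None:
        """Rename a directory by touching one node; descendants are not rewritten."""
        node = self.children.pop(old)
        node.name = new
        self.children[new] = node


def depth(node: Directory) -> int:
    """Number of directory levels below node."""
    if not node.children:
        return 0
    return 1 + max(depth(child) for child in node.children.values())


root = Directory("container")
leaf = root.mkdirs("finance/2024/q1")       # three levels of subfolders
leaf.acl["analysts"] = "r-x"                 # ACL attached to the directory itself
root.rename_child("finance", "accounting")  # a single operation on one node

print(depth(root))
print(root.children["accounting"].children["2024"].children["q1"].acl)
```

In a flat namespace, the same rename would mean rewriting every object whose name starts with the old prefix, which is why the hierarchical namespace matters for the subfolder and ACL requirements.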

Reference:

https://docs.microsoft.com/en-us/azure/storage/common/storage-account-overview

https://docs.microsoft.com/en-us/azure/storage/blobs/data-lake-storage-namespace

You have an Azure Active Directory (Azure AD) tenant that syncs with an on-premises Active Directory domain.

You have an internal web app named WebApp1 that is hosted on-premises. WebApp1 uses Integrated Windows authentication.

Some users work remotely and do NOT have VPN access to the on-premises network.

You need to provide the remote users with single sign-on (SSO) access to WebApp1.

Which two features should you include in the solution? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

- A . Azure AD Application Proxy

- B . Azure AD Privileged Identity Management (PIM)

- C . Conditional Access policies

- D . Azure Arc

- E . Azure AD enterprise applications

- F . Azure Application Gateway

AE

Explanation:

A: Application Proxy is a feature of Azure AD that enables users to access on-premises web applications from a remote client. Application Proxy includes both the Application Proxy service, which runs in the cloud, and the Application Proxy connector, which runs on an on-premises server.

You can configure single sign-on to an Application Proxy application.

C: Microsoft recommends using Application Proxy with pre-authentication and Conditional Access policies for remote access from the internet. An approach to provide Conditional Access for intranet use is to modernize applications so they can directly authenticate with AAD.

Reference:

https://docs.microsoft.com/en-us/azure/active-directory/app-proxy/application-proxy-config-sso-how-to

https://docs.microsoft.com/en-us/azure/active-directory/app-proxy/application-proxy-deployment-plan

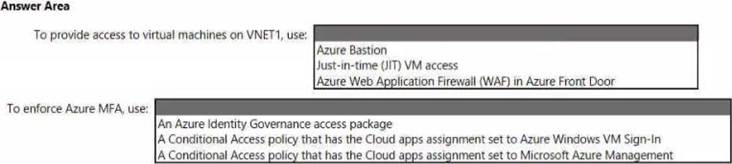

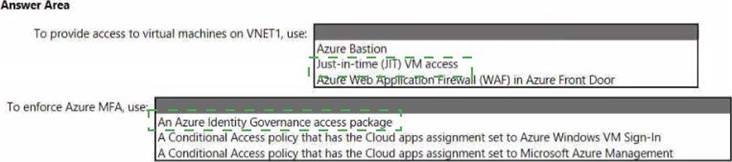

HOTSPOT

You have an Azure subscription that contains a virtual network named VNET1 and 10 virtual machines. The virtual machines are connected to VNET1.

You need to design a solution to manage the virtual machines from the internet.

The solution must meet the following requirements:

• Incoming connections to the virtual machines must be authenticated by using Azure Multi-Factor Authentication (MFA) before network connectivity is allowed.

• Incoming connections must use TLS and connect to TCP port 443.

• The solution must support RDP and SSH.

What should you include in the solution? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

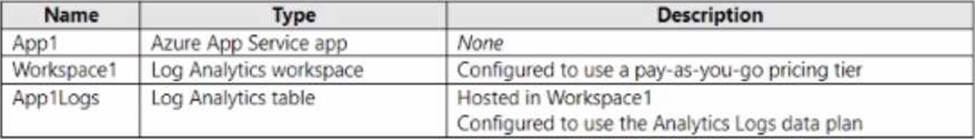

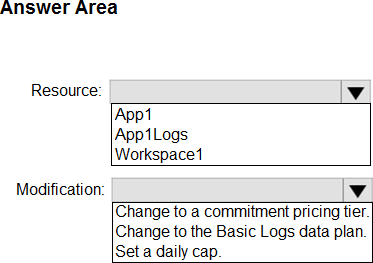

You have an Azure subscription that contains the resources shown in the following table.

Log files from App1 are ingested to App 1 Logs. An average of 120 GB of log data is ingested per day.

You configure an Azure Monitor alert that will be triggered if the App1 logs contain error messages.

You need to minimize the Log Analytics costs associated with App1.

The solution must meet the following requirements:

• Ensure that all the log files from App1 are ingested to App 1 Logs.

• Minimize the impact on the Azure Monitor alert.

Which resource should you modify, and which modification should you perform? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

Your company has deployed several virtual machines (VMs) on-premises and to Azure. Azure ExpressRoute has been deployed and configured for on-premises to Azure connectivity.

Several VMs are exhibiting network connectivity issues.

You need to analyze the network traffic to determine whether packets are being allowed or denied to the VMs.

Solution: Install and configure the Microsoft Monitoring Agent and the Dependency Agent on all VMs. Use the Wire Data solution in Azure Monitor to analyze the network traffic.

Does the solution meet the goal?

- A . Yes

- B . No

B

Explanation:

Instead, use IP flow verify in Azure Network Watcher to analyze the network traffic.

Note: Wire Data looks at network data at the application level, not down at the TCP transport layer.

The solution doesn’t look at individual ACKs and SYNs.
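IP flow verify reports whether a given packet is allowed or denied and which security rule made that decision. The sketch below models that idea in plain Python under simplifying assumptions: the `Rule` class, the `verify_flow` function, and the rule names and addresses are hypothetical, and only inbound rules with a single source prefix and port are considered. It does not call any Azure API.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class Rule:
    """Simplified, hypothetical stand-in for one inbound security rule."""
    name: str
    priority: int             # lower number is evaluated first
    protocol: str             # "TCP", "UDP", or "*"
    source: str               # CIDR prefix
    dest_port: Optional[int]  # None matches any port
    action: str               # "Allow" or "Deny"


def verify_flow(rules, protocol, source_ip, dest_port):
    """Return (action, rule name) for the first rule that matches the packet."""
    for rule in sorted(rules, key=lambda r: r.priority):
        if rule.protocol not in ("*", protocol):
            continue
        if ip_address(source_ip) not in ip_network(rule.source):
            continue
        if rule.dest_port is not None and rule.dest_port != dest_port:
            continue
        return rule.action, rule.name
    return "Deny", "no matching rule"


rules = [
    Rule("AllowHttpsFromOffice", 100, "TCP", "203.0.113.0/24", 443, "Allow"),
    Rule("DenyAllInbound", 65500, "*", "0.0.0.0/0", None, "Deny"),
]

print(verify_flow(rules, "TCP", "203.0.113.10", 443))
print(verify_flow(rules, "TCP", "198.51.100.7", 3389))
```

The point of the model is that the check works on the packet's protocol, addresses, and ports against prioritized rules, which is the level at which "allowed or denied" is decided.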

Reference:

https://docs.microsoft.com/en-us/azure/network-watcher/network-watcher-monitoring-overview

https://docs.microsoft.com/en-us/azure/network-watcher/network-watcher-ip-flow-verify-overview

You are designing a solution that calculates 3D geometry from height-map data.

You need to recommend a solution that meets the following requirements:

• Performs calculations in Azure.

• Ensures that each node can communicate data to every other node.

• Maximizes the number of nodes to calculate multiple scenes as fast as possible.

• Minimizes the amount of effort to implement the solution.

Which two actions should you include in the recommendation? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

- A . Create a render farm that uses virtual machine scale sets.

- B . Enable parallel file systems on Azure.

- C . Create a render farm that uses virtual machines.

- D . Enable parallel task execution on compute nodes.

- E . Create a render farm that uses Azure Batch.

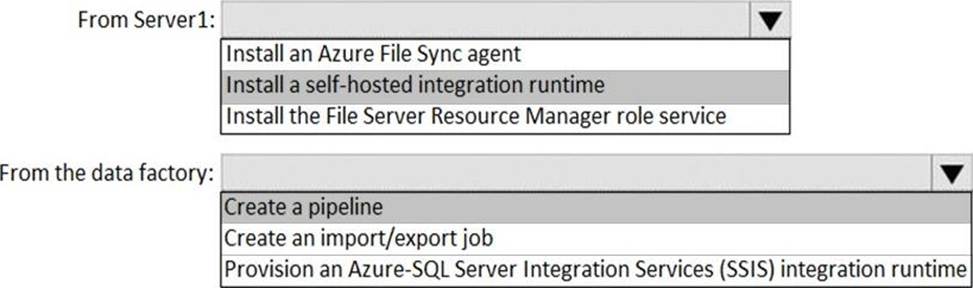

HOTSPOT

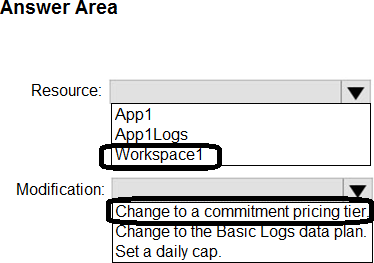

Your on-premises network contains a file server named Server1 that stores 500 GB of data.

You need to use Azure Data Factory to copy the data from Server1 to Azure Storage.

You add a new data factory.

What should you do next? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

Explanation:

Box 1: Install a self-hosted integration runtime

The Integration Runtime is a customer-managed data integration infrastructure used by Azure Data Factory to provide data integration capabilities across different network environments.

Box 2: Create a pipeline

With ADF, existing data processing services can be composed into data pipelines that are highly available and managed in the cloud. These data pipelines can be scheduled to ingest, prepare, transform, analyze, and publish data, and ADF manages and orchestrates the complex data and processing dependencies.
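As a rough illustration of how the two boxes fit together, the sketch below builds the kind of JSON definitions Data Factory works with, expressed as Python dictionaries. All resource names (`Server1FileShare`, `SelfHostedIR`, `Server1Files`, `BlobFiles`, `CopyServer1ToBlob`) are hypothetical, the host path and user are placeholders, and the exact property names should be checked against the current Data Factory documentation before use.

```python
import json

# Hypothetical names throughout; only the overall shape follows Data Factory conventions.
linked_service = {
    "name": "Server1FileShare",
    "properties": {
        "type": "FileServer",
        "typeProperties": {"host": "\\\\Server1\\data", "userId": "<user>"},
        # Box 1: route the connection through the self-hosted integration runtime.
        "connectVia": {
            "referenceName": "SelfHostedIR",
            "type": "IntegrationRuntimeReference",
        },
    },
}

# Box 2: a pipeline with a single copy activity from the file server to blob storage.
pipeline = {
    "name": "CopyServer1ToBlob",
    "properties": {
        "activities": [
            {
                "name": "CopyFiles",
                "type": "Copy",
                "inputs": [{"referenceName": "Server1Files", "type": "DatasetReference"}],
                "outputs": [{"referenceName": "BlobFiles", "type": "DatasetReference"}],
                "typeProperties": {
                    "source": {"type": "FileSystemSource", "recursive": True},
                    "sink": {"type": "BlobSink"},
                },
            }
        ]
    },
}

print(json.dumps(pipeline, indent=2))
```

The linked service is what ties the on-premises source to the self-hosted runtime; the pipeline itself only references datasets and does not need to know where the runtime is installed.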

References:

https://docs.microsoft.com/en-us/azure/machine-learning/team-data-science-process/move-sql-azure-adf

https://docs.microsoft.com/pl-pl/azure/data-factory/tutorial-hybrid-copy-data-tool

https://docs.microsoft.com/en-us/azure/data-factory/create-self-hosted-integration-runtime?tabs=data-factory

"A self-hosted integration runtime can run copy activities between a cloud data store and a data store in a private network"

https://docs.microsoft.com/en-us/azure/data-factory/introduction

"With Data Factory, you can use the Copy Activity in a data pipeline to move data from both on-premises and cloud source data stores to a centralization data store in the cloud for further analysis"

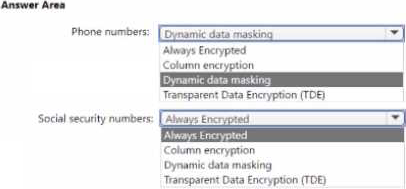

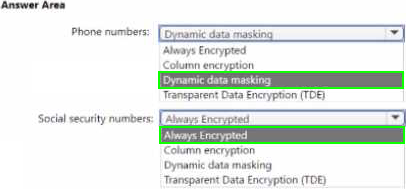

HOTSPOT

You have an Azure subscription. The subscription contains an Azure SQL managed instance that stores employee details, including social security numbers and phone numbers.

You need to configure the managed instance to meet the following requirements:

• The helpdesk team must see only the last four digits of an employee’s phone number.

• Cloud administrators must be prevented from seeing the employee’s social security numbers.

What should you enable for each column in the managed instance? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

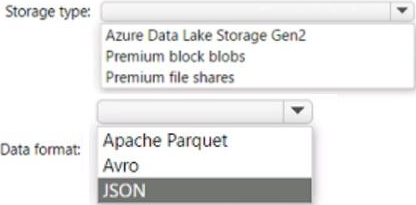

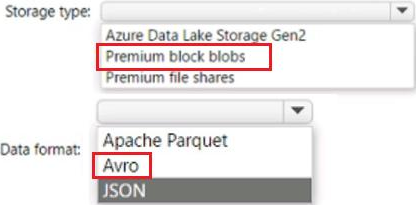

HOTSPOT

You have an app that generates 50,000 events daily.

You plan to stream the events to an Azure event hub and use Event Hubs Capture to implement cold path processing of the events. The output of Event Hubs Capture will be consumed by a reporting system.

You need to identify which type of Azure storage must be provisioned to support Event Hubs Capture, and which inbound data format the reporting system must support.

What should you identify? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.